Hazards on the Causal Path

Three Ways to Say ‘Because’ in Survival Analysis

Data Science @ Personio

and Open Source Contributor @ PyMC

2026-06-14

Preliminaries

Who am I?

- I’m a data scientist at Personio

- Bayesian statistician,

- Reformed philosopher and logician.

- Website: https://nathanielf.github.io/

Link to my website

Code or it didn’t Happen

The full worked example can be found on my blog

“Causation is not a relation between events frozen in amber.

It is the process by which one thing makes another happen.”

The Pitch

Data scientists use causal inference every day.

But “causal inference” is not one thing. There are at least three schools, and they disagree about mediation.

This talk: build a model that works for all of them. Along the way, dissolve a philosophical puzzle.

Agenda

- Three ways to say “because” — and where they disagree

- Building a generative process model in PyMC

- Dissolving the cross-world counterfactual objection

Act I: Three Ways to Say “Because”

The Pre-emption Puzzle

Two assassins are hired. Assassin A poisons the coffee. Assassin B waits with a sniper rifle as backup.

A’s poison works. B never fires. The target dies.

Counterfactual test: “Would the target have survived if A hadn’t acted?”

No — B would have fired. So A fails the counterfactual test.

But A clearly caused the death.

School 1: Potential Outcomes

The Framework

- Rubin, Holland (1986): “No causation without manipulation”

- Cause = difference between potential outcomes: \(Y(1) - Y(0)\)

- Clean, experiment-centric

- The gold standard for average treatment effects

The Limitation

- Mediation requires \(Y(1, M(0))\)

- “What would your outcome be under treatment, if your mediator took the value it would have had under control?”

- This nests two worlds

- Many PO theorists reject this as ill-defined

School 2: Structural Causal Models

The Framework

- Pearl (2001): DAGs + structural equations + do-calculus

- Allows cross-world counterfactuals via structural equations

- \(\text{NDE} = E[Y(1, M(0))] - E[Y(0, M(0))]\) is well-defined

- Mediation is a first-class citizen

The Cost

- Requires commitment to structural equations as real

- The cross-world counterfactual remains philosophically contentious

- “Your structural equations had better be right”

School 3: Process / Aalen

Aalen (1987, 1989): Causation as unfolding temporal process.

Effects are increments to risk over time, not static comparisons between frozen worlds.

The causal story is told by the trajectory, not the endpoint.

Time is the medium, not just a container.

This is where survival analysis lives naturally. And it sidesteps the cross-world problem entirely.

The Stalemate

| Potential Outcomes | Pearl SCM | Process (Aalen) | |

|---|---|---|---|

| Cause is… | Difference between worlds | Structural mechanism | Unfolding process |

| Mediation? | Ill-defined (cross-world) | Well-defined (structural eqns) | Naturally decomposable |

| Strength | Clean identification | Full causal calculus | Temporal dynamics |

| Weakness | No mediation | Structural faith | No marginal estimand? |

What if one model could give you all three rows of the last column?

Back to Earth: A Concrete Problem

A patient starts an exercise programme.

It reduces cardiovascular risk directly. Good.

But it also causes joint inflammation. The inflammation increases risk over time.

At the six-month endpoint, the treatment looks modestly protective.

You have no idea it’s being undermined.

Three schools, three different things they can tell you about this situation.

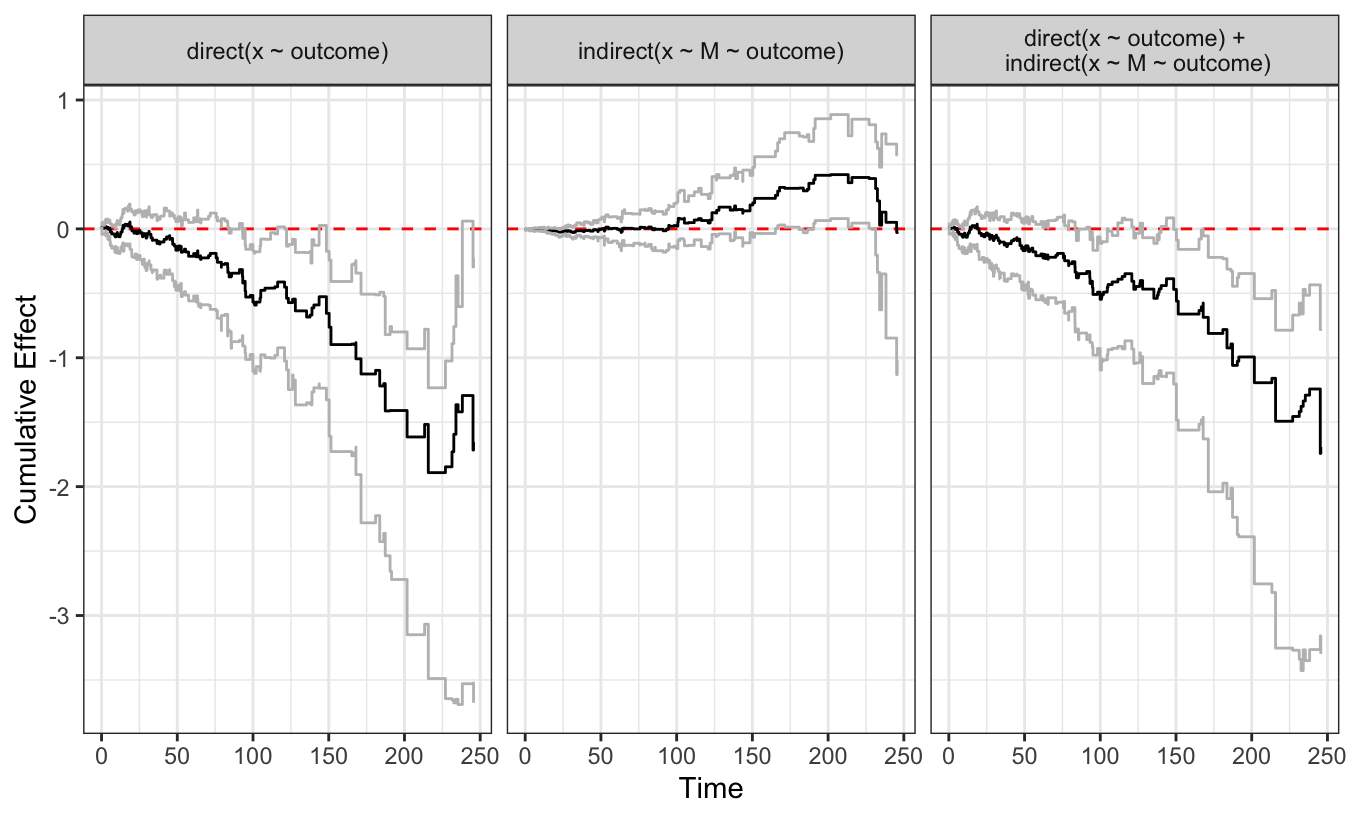

What the Decomposition Reveals

Three panels. Three stories your endpoint missed.

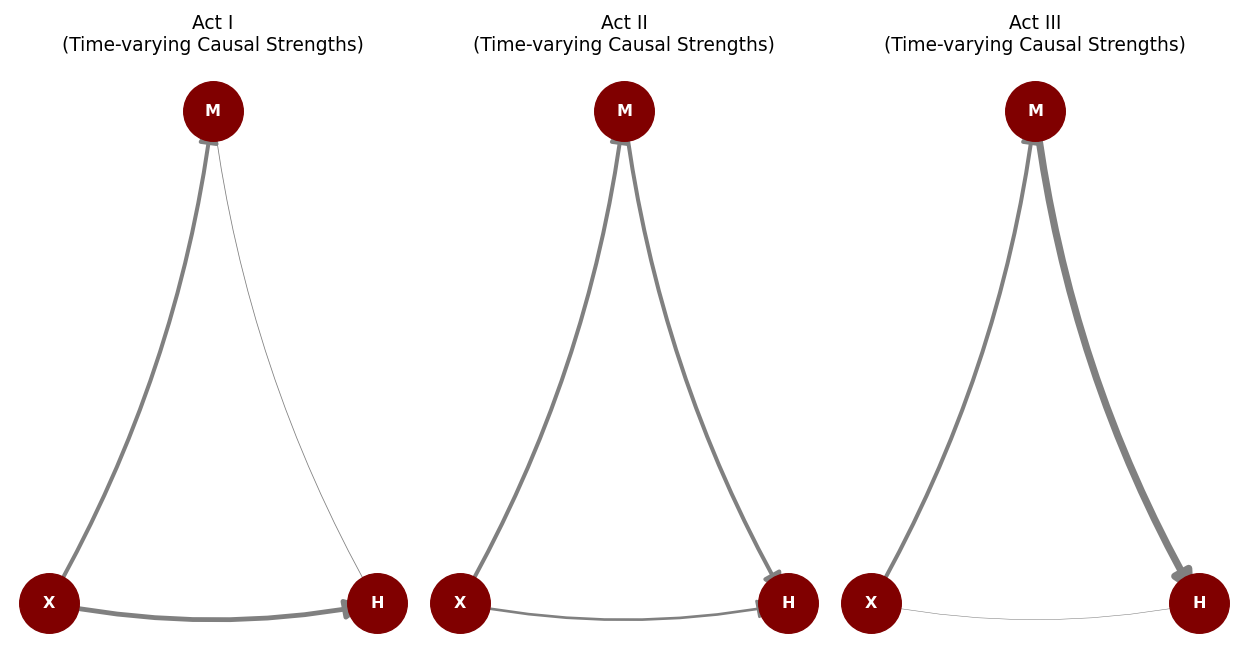

The Structure: A Sequence of DAGs

The arrows don’t just exist. They strengthen and weaken over time.

Act II: Building the Process Model

Why a Generative Model?

The process view demands a model that generates trajectories, not just estimates coefficients.

A partial likelihood (Cox) conditions out time. We need time in.

A full Poisson likelihood gives us: explicit baseline hazard, time-varying coefficients, and the ability to simulate counterfactual worlds.

Three technical moves: discretise, smooth, bridge.

Discretise + Smooth

Discretise time into bins

# Each row: who was at risk, how long, what happened

df["dt"] = df["stop"] - df["start"]

bin_idx = df["bin_idx"].valuesTime is no longer background noise. It’s a covariate.

Smooth with B-splines

# Random walk prior on spline coefficients

beta_raw = pm.Normal("beta_raw", 0, 1,

dims=('splines', 'tv'))

coef_alpha = pt.cumsum(beta_raw * sigma_smooth,

axis=0)

alpha_1_t = pt.dot(basis, coef_alpha[:, 1])The cumulative sum is a random walk. The data decides how much adjacent coefficients differ.

The Poisson Bridge

\[d_{it} \sim \text{Poisson}(\lambda(t)), \quad \lambda(t) = Y_{it} \cdot \Delta t \cdot f(\text{linear predictor})\]

Binary events are counts capped at 1.

The baseline hazard becomes an explicit parameter, not a nuisance to be conditioned away.

This is what makes the model generative. We can sample from it. We can intervene on it.

The Joint Model

Mediator equation:

\[M_t = \beta(t) \cdot X + \rho \cdot M_{t-1} + \epsilon\]

The mediator has inertia. Its dynamics are modelled, not assumed away.

Hazard equation:

\[\lambda(t) = f\big(\alpha_0(t) + \alpha_1(t) X + \alpha_2(t) M_{t-1}\big)\]

Direct and indirect paths, both time-varying.

These are estimated simultaneously. One likelihood, one posterior.

This is a structural equation model in Pearl’s sense, with time-varying coefficients in Aalen’s sense.

The Softplus Link

\[f(x) = \ln(1 + \exp(x))\]

Ensures the hazard is always positive. Smooth. Differentiable. MCMC-friendly.

This is not Aalen’s identity link. The path decomposition is approximate on the hazard scale.

That’s fine. The decomposition is diagnostic. The causal estimand lives elsewhere.

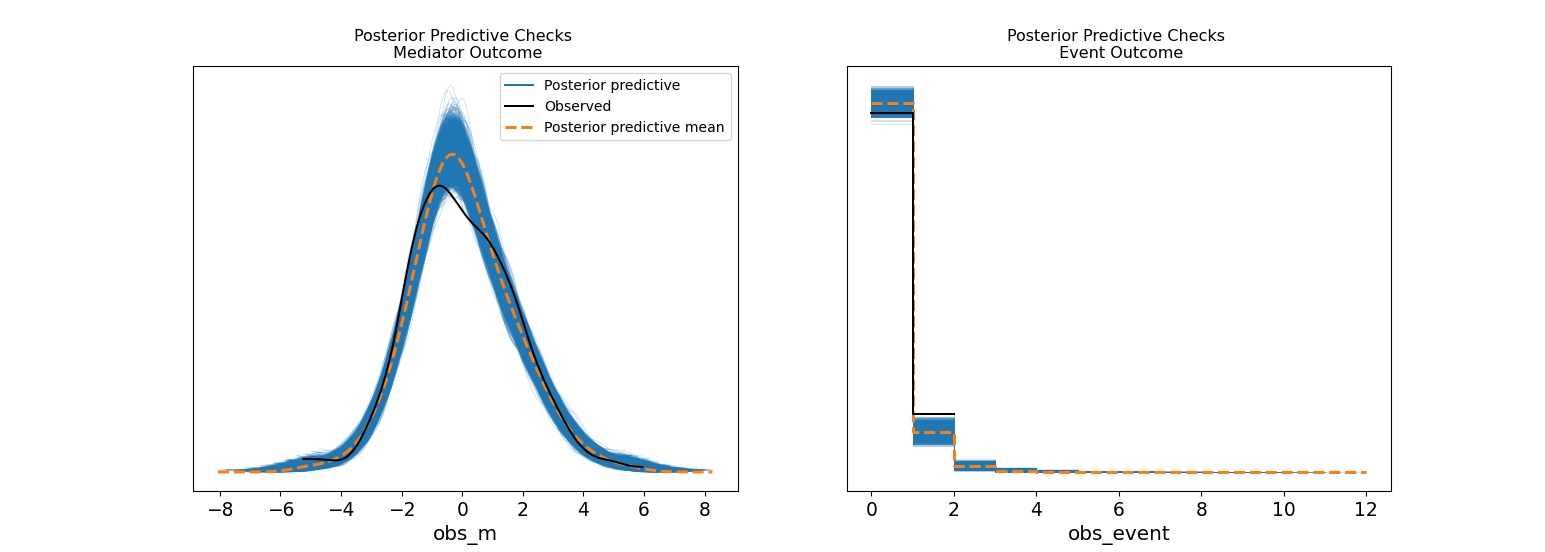

Diagnostics: Does the Model Fit?

Left: mediator model fits. Right: event model fits. The generative model is internally coherent.

Act III: Dissolving the Puzzle

The Path Decomposition

These time-varying coefficients show which channel dominates when:

- Direct effect: protective throughout

- Indirect effect: inert, then harmful after day 100

- Total effect: attenuated

This is the model’s internal mechanics.

The structure of mediation, not the magnitude.

But mechanism is not yet an estimand. The potential outcomes school is unimpressed.

G-Computation: Two Counterfactual Worlds

# World 1: everyone treated

pm.set_data({'trt': np.ones(n)})

idata_trt1 = pm.sample_posterior_predictive(

idata, var_names=['Lambda', 'obs_m', 'obs_event'])

# World 0: nobody treated

pm.set_data({'trt': np.zeros(n)})

idata_trt0 = pm.sample_posterior_predictive(

idata, var_names=['Lambda', 'obs_m', 'obs_event'])Two counterfactual worlds. Same population. Same model.

The mediator evolves naturally under each intervention.

The Survival Contrast

The causal estimand:

\[\bar{S}_{\text{treated}}(t) - \bar{S}_{\text{control}}(t)\]

Computed by:

- Individual hazard trajectories for each posterior draw

- Transform to survival: \(S_i(t) = \exp(-\Lambda_i(t))\)

- Average across individuals

- Compare

Defined interventionally.

Estimated processually.

Full posterior uncertainty.

The NDE requires \(Y(1, M(0))\). This nests two worlds.

. . .

The potential outcomes school says: “Ill-defined.”

. . .

Pearl says: “Well-defined, via structural equations.”

. . .

The process model says: “I just simulated it.”

NDE from the Process Model

# Mediator trajectories under control (the "natural" M(0))

m_nat = idata_trt0.posterior_predictive['obs_m']

# Hazard under treatment, but with control mediator

eta_nde = (alpha_0(t) + alpha_1(t) * 1

+ alpha_2(t) * m_nat)

# Transform through the link and simulate

hazard_nde = dt * log1pexp(eta_nde)The cross-world counterfactual is just plugging one simulation’s mediator into another simulation’s hazard.

~25% risk reduction via direct path alone. The mediator claws back ~8%.

The same model generates both counterfactual worlds.

. . .

Modeling choices affect both equally.

. . .

What survives in the difference is the causal signal.

What the Practitioner Does

The treatment reduces events by ~17% overall.

But: the direct effect gives ~25% reduction. The mediator claws back ~8%.

The next intervention targets the mediator after day 100.

Without the decomposition, you’d report “modest benefit” and stop.

With it, you know exactly where to push next.

When to Use What

If you need total effects and have a clean experiment:

Potential outcomes + Cox is fine.

If you need mediation but effects are time-constant:

Pearl’s mediation formulas work.

If effects change over time and you suspect a mediator whose influence evolves:

Dynamic path analysis gives you the process and the estimands.

How to tell: plot Schoenfeld residuals. If they trend, you have time-varying effects. If you also have a candidate mediator, you have a dynamic path problem.

What We Built

1. A Bayesian dynamic path analysis in PyMC. A generative model of the causal process, with full posterior uncertainty.

2. G-computation that converts process to estimand — including NDE/NIE — without requiring philosophical commitment to cross-world counterfactuals.

3. A practical resolution of a real philosophical debate. The generative model dissolves the objection by making the counterfactual concrete.

The process tells you why.

The counterfactual tells you how much.

The generative model tells you both.

Thank You

Resources

- Blog post: nathanielf.github.io

- PyMC: pymc.io

- Fosen et al. (2006), “Dynamic path analysis,” Lifetime Data Analysis

- Aalen (1989), “A linear regression model for the analysis of life times,” Statistics in Medicine

- Holland (1986), “Statistics and Causal Inference,” JASA

- Pearl (2001), “Direct and Indirect Effects,” UAI

- Robins & Richardson (2010), “Alternative graphical causal models”

Three Ways to Say ‘Because’ | PyData London 2026